|

Very good. Will change that and test it. Thank you for this advanced support.

|

|

|

|

|

IT WORKS! Thank you very much!

|

|

|

|

|

Hi,

Where can I find the update log for the most recent version of CodeProject?

Is this something I need to ask for, or should it always be available?

I ask because I have not been able to find it anywhere on the site, though I may simply not have looked in the right place.

Thanks.

|

|

|

|

|

|

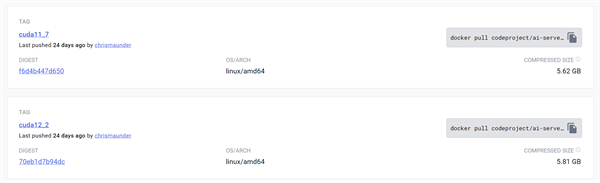

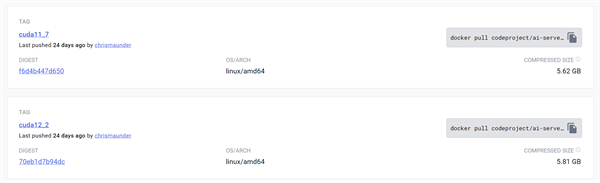

The latest release under the GPU tag is 2.2.4 (2 months ago), while the latest version, 2.3.4, is available for all target platforms except GPU.

Was the support for GPU removed?

|

|

|

|

|

There are now two Docker tags for GPU, one for CUDA 11.7 and one for CUDA 12.2:

docker pull codeproject/ai-server:cuda11_7

docker pull codeproject/ai-server:cuda12_2

|

|

|

|

|

Hello

I was wondering if I could buy a Coral Dev Board and install CodeProject.AI on it?

I wanted a dedicated processing unit instead of running it in my virtual machine and using the CPU to process.

I would love any input. Thank you very much!

|

|

|

|

|

In theory, that should work fine, but you'd need the 4GB RAM board, not the 1GB.

Thanks,

Sean Ewington

CodeProject

|

|

|

|

|

Error in Install LlamaChat: Unable to download module 'LlamaChat' from https:

The status changed to Unknown. The install button reappears after a while, but not after a page refresh, so I suspect the status is refreshed about every 5 minutes.

I attempted the install 3 times and disabled anything that could have interfered.

--

I have another project for LLM called oogabooga. I'm using this currently for API calls to my iOS YourChat app.

If LlamaChat has an API and supports GGML and other formats, I will eventually switch to it.

|

|

|

|

|

Hey.

Has anyone had success using an Apple M1/M2? Are detection times similar to an RTX 3050/3060? I'm considering using a headless Mac mini M1/M2 for Blue Iris.

Any insight would be great.

|

|

|

|

|

I develop CodeProject.AI on my M1 Mac mini and use it all the time. It's not as fast as my RTX 3060, but it is faster than my Windows machine that uses DirectML and integrated graphics. Bang for buck, it's brilliant.

I was saving my pennies for an M3, but it seems like an M2 is probably the best price point right now.

cheers

Chris Maunder

|

|

|

|

|

Nice. I've seen a few posts you made about it; that's what got the wheels turning. I've got my Blue Iris rig, which is a space heater in the media room. Granted, it doesn't pull too many watts: 120 or so with a 12400/3060. I tried the M.2 Coral and wasn't too happy with the result. Maybe today I'll load up VMware, install Win11 to run 80 MP/s of Blue Iris, and install CodeProject.AI on the macOS side of my M2 mini to see how it runs.

|

|

|

|

|

I tried running Windows 11 using Parallels on the Mac mini M1 a couple of years ago, but it was still dog slow. I'd be curious as to what you're seeing with VMware.

cheers

Chris Maunder

|

|

|

|

|

Hi. I've installed CUDA 11.7 (the full install, which also installs the NVIDIA graphics driver), cuDNN (via the provided batch file), the latest CodeProject.AI Server v2.3.4, and the OCR module with GPU enabled, and tested on some simple text images. Unfortunately, I'm finding that OCR text conversion is slower with GPU enabled. I'm assuming the RTX 3060 is supported by the installed PaddleOCR components. Any help resolving this issue is greatly appreciated.

Here is info from the System Info tab:

System: Windows

Operating System: Windows (Microsoft Windows 11 version 10.0.22621)

CPUs: 12th Gen Intel(R) Core(TM) i3-12100F (Intel)

1 CPU x 4 cores. 8 logical processors (x64)

GPU: NVIDIA GeForce RTX 3060 (12 GiB) (NVIDIA)

Driver: 516.01 CUDA: 11.7.64 (max supported: 11.7) Compute: 8.6

System RAM: 16 GiB

Target: Windows

BuildConfig: Release

Execution Env: Native

Runtime Env: Production

.NET framework: .NET 7.0.10

App DataDir: C:\ProgramData\CodeProject\AI

Video adapter info:

NVIDIA GeForce RTX 3060:

Driver Version 31.0.15.1601

Video Processor NVIDIA GeForce RTX 3060

Here are the results of the OCR module with GPU enabled (from the log):

2023-11-19 09:36:22: Client request 'ocr' in queue 'ocr_queue' (#reqid 454dd621-f0b6-4542-b509-104af7184165)

2023-11-19 09:36:22: Optical Character Recognition: Retrieved ocr_queue command in Optical Character Recognition

2023-11-19 09:36:22: OCR_adapter.py: [2023/11/19 09:36:22] ppocr WARNING: Since the angle classifier is not initialized, the angle classifier will not be uesd during the forward process

2023-11-19 09:36:26: Optical Character Recognition: Rec'd request for Optical Character Recognition command 'ocr' (#reqid 454dd621-f0b6-4542-b509-104af7184165) took 3224ms (command timing) in Optical Character Recognition

2023-11-19 09:36:26: Response received (#reqid 454dd621-f0b6-4542-b509-104af7184165): 2 pieces of text found

And here are the results of the OCR module with GPU disabled (from the log, same image):

2023-11-19 09:40:49: Request 'ocr' dequeued from 'ocr_queue' (#reqid 2c0cf1ab-9680-43ce-b213-03413deca7a1)

2023-11-19 09:40:49: Client request 'ocr' in queue 'ocr_queue' (#reqid 2c0cf1ab-9680-43ce-b213-03413deca7a1)

2023-11-19 09:40:49: Optical Character Recognition: Retrieved ocr_queue command in Optical Character Recognition

2023-11-19 09:40:49: OCR_adapter.py: [2023/11/19 09:40:49] ppocr WARNING: Since the angle classifier is not initialized, the angle classifier will not be uesd during the forward process

2023-11-19 09:40:49: Optical Character Recognition: Rec'd request for Optical Character Recognition command 'ocr' (#reqid 2c0cf1ab-9680-43ce-b213-03413deca7a1) took 211ms (command timing) in Optical Character Recognition

2023-11-19 09:40:49: Response received (#reqid 2c0cf1ab-9680-43ce-b213-03413deca7a1): 2 pieces of text found

Note that with GPU enabled, the OCR request took 3224 ms, whereas with GPU disabled it took 211 ms.
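For comparing runs like the two above, the timings can be pulled out of the server log rather than read by eye. The following is a minimal sketch that assumes the log keeps the `took <n>ms` wording shown in the lines above; the function name `command_timings_ms` and the shortened sample strings are illustrative only.

```python
import re
from typing import List

# Matches the "took 3224ms" fragment seen in the log lines above.
# Assumption: the server keeps this exact wording for command timings.
TIMING_RE = re.compile(r"took (\d+)ms")


def command_timings_ms(log_text: str) -> List[int]:
    """Return every 'took <n>ms' duration found in the log text, in order."""
    return [int(match.group(1)) for match in TIMING_RE.finditer(log_text)]


# Shortened excerpts of the two log lines quoted above.
gpu_line = "command 'ocr' took 3224ms (command timing) in Optical Character Recognition"
cpu_line = "command 'ocr' took 211ms (command timing) in Optical Character Recognition"

gpu_ms = command_timings_ms(gpu_line)
cpu_ms = command_timings_ms(cpu_line)
print(gpu_ms, cpu_ms)
```

A single pair of numbers like this only compares one request per configuration, so it is worth collecting several requests before drawing conclusions.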

|

|

|

|

|

The first text read will be slow because the OCR models are being loaded. Does the speed get faster for a second read using a GPU?
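One way to check this is to time several reads in a row and look at the first one separately from the rest. The sketch below is only an illustration: `time_calls` and `summarize` are hypothetical helper names, and the callable you pass in would be whatever sends one OCR request to your server.

```python
import time
from typing import Callable, List, Optional


def time_calls(call: Callable[[], object], repeats: int = 5) -> List[float]:
    """Run `call` `repeats` times and return each duration in milliseconds."""
    durations = []
    for _ in range(repeats):
        start = time.perf_counter()
        call()
        durations.append((time.perf_counter() - start) * 1000.0)
    return durations


def summarize(durations: List[float]) -> dict:
    """Separate the first (cold) call from the remaining (warm) calls."""
    warm = durations[1:]
    warm_avg: Optional[float] = sum(warm) / len(warm) if warm else None
    return {"cold_ms": durations[0], "warm_avg_ms": warm_avg}


# Usage (not executed here): pass a function that performs one OCR request,
# then compare summarize(...)["cold_ms"] with summarize(...)["warm_avg_ms"].
```

If the warm average is far below the cold time, the slow first read is most likely model loading rather than slow GPU inference.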

|

|

|

|

|

Yes, that's it, Mike. Thanks for that! On the 2nd and subsequent reads I'm getting times in the range of 37-62 ms. This is great! Now, how would I run regular PaddleOCR Python scripts in this OCR virtual environment? For example:

# imports

import cv2

from paddleocr import PaddleOCR

ocr = PaddleOCR(lang='en', use_gpu=True, enable_mkldnn=True, use_angle_cls=False, table=False, layout=False, show_log=False)

result = ocr.ocr('test.png', cls=False, det=True, rec=True)

print(result)

|

|

|

|

|

|

Thanks Mike. I'm doing some testing running PaddleOCR in AI Server's OCR virtual environment instead of installing my own.

Running on a Win10 Surface Book 2 laptop with no CUDA/cuDNN, I have no issues running PaddleOCR code using a test script:

- OCR module installed using requirements.txt (paddlepaddle==2.5.1, paddleocr)

- "C:\Program Files\CodeProject\AI\modules\OCR\bin\windows\python37\venv\Scripts\python" test.py

- ocr = PaddleOCR(lang='en', use_gpu=False, enable_mkldnn=True, use_angle_cls=False, table=False, layout=False, show_log=False)

result = ocr.ocr('test.png', cls=False, det=True, rec=True)

However, running the same script on a Win11 PC with CUDA/cuDNN, I'm getting an error as follows:

- OCR module installed using requirements.windows.cuda11_7.txt (paddlepaddle-gpu==2.5.1.post117, paddleocr)

- "C:\Program Files\CodeProject\AI\modules\OCR\bin\windows\python37\venv\Scripts\python" test.py

- ocr = PaddleOCR(lang='en', use_gpu=True, enable_mkldnn=True, use_angle_cls=False, table=False, layout=False, show_log=False)

result = ocr.ocr('test.png', cls=False, det=True, rec=True)

- ImportError: cannot import name 'PaddleOCR' from 'paddleocr' (unknown location)

Any idea what is causing this error? It appears to be related to the paddlepaddle CPU vs GPU package installed.

|

|

|

|

|

If CodeProject.AI is installed correctly and the server is running, all you need to OCR an image is the code below:

import requests

image_data = open("test.png","rb").read()

response = requests.post("http://localhost:32168/v1/image/ocr",

files={"image":image_data}).json()

print(response)
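To get just the recognized text out of the response, something like the sketch below may help. It assumes the JSON contains a `success` flag and a `predictions` list whose items carry `label` and `confidence` fields; those field names are an assumption here, so check them against what `print(response)` shows on your server. The `extract_text` helper and the sample dictionary are hypothetical.

```python
from typing import List


def extract_text(response: dict, min_confidence: float = 0.0) -> List[str]:
    """Collect recognized strings from an OCR response dictionary.

    Assumed shape (verify against your own server output):
    {"success": bool, "predictions": [{"label": str, "confidence": float}, ...]}
    """
    if not response.get("success", False):
        return []
    texts = []
    for item in response.get("predictions") or []:
        label = item.get("label")
        if label and item.get("confidence", 0.0) >= min_confidence:
            texts.append(label)
    return texts


# Hypothetical response used only to show the helper in action.
sample = {
    "success": True,
    "predictions": [
        {"label": "HELLO", "confidence": 0.98},
        {"label": "W0RLD", "confidence": 0.41},
    ],
}
print(extract_text(sample, min_confidence=0.5))
```

Returning an empty list on failure keeps the caller simple; if you need to tell "no text" apart from "request failed", check the `success` flag before calling the helper.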

|

|

|

|

|

Mike, this is fantastic. Thank you! It's interesting: if I run the CodeProject.AI Python code you provided above, the OCR is blazing fast on my PC with a supported NVIDIA card. However, if I run the standard PaddleOCR code via the CodeProject.AI virtual Python environment (PaddleOCR use_gpu=True), the OCR is incredibly slow. The CodeProject.AI server is doing some serious magic to get GPU OCR working.

PS - regarding the "ImportError: cannot import name 'PaddleOCR' from 'paddleocr' (unknown location)" error I reported earlier, the solution for me was to go to advanced security properties for the 'C:\Program Files\CodeProject\AI\modules\OCR\bin\windows\python37\venv\Lib\site-packages' folder and select 'replace all child object permissions…'. For some reason, the CodeProject.AI installer was restricting permissions on this folder.
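For anyone hitting the same ImportError: the "(unknown location)" part is often a sign that Python found a folder with the package's name but could not load a file from it, which is consistent with a permissions problem. A small diagnostic sketch, run with the module's venv Python, can show where a package resolves and whether its file is readable. The helper name `describe_module` is hypothetical, and it only uses standard-library calls.

```python
import importlib.util
import os


def describe_module(name: str) -> dict:
    """Report whether a top-level module is found and its file is readable."""
    spec = importlib.util.find_spec(name)
    if spec is None:
        return {"found": False, "origin": None, "readable": False}
    origin = spec.origin
    # origin is None for namespace packages, i.e. a bare folder with no
    # loadable __init__.py, which is what "(unknown location)" suggests.
    has_file = origin is not None and os.path.isfile(origin)
    readable = has_file and os.access(origin, os.R_OK)
    return {"found": True, "origin": origin, "readable": readable}


# Demonstrated on a standard-library package; use "paddleocr" in the OCR venv.
print(describe_module("json"))
```

If `found` is true but `origin` is `None` or `readable` is false, checking the permissions on the site-packages folder, as described above, is a reasonable next step.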

modified 29-Nov-23 13:46pm.

|

|

|

|

|

I am using CPAI with Blue Iris. I can see the available custom models for BI in the Blue Iris settings. By default it looks like there are:

actionnetv2.pt

ipcam-animal.pt

ipcam-combined.pt

ipcam-dark.pt

ipcam-general.pt

license-plate.pt

In Blue Iris, each camera can have custom models used for the AI alert. On most of my cameras, I don't need to know if there is a license plate, as they are more for people. Can I just list the models that I want a camera to use and thus not use license-plate.pt?

Do I list them in a string with commas between them, and do I include the extension (.pt)?

actionnetv2.pt,ipcam-animal.pt,ipcam-combined.pt,ipcam-dark.pt,ipcam-general.pt

Is there a way to use boolean operators in this area so if I want to use all the models EXCEPT the license plate model I could exclude it?

Is there a place I can get a general idea of what each is used for so I can know if I should include it?

Lastly if this is better posted in another section of forum, please let me know.

Thanks

modified 20-Nov-23 11:33am.

|

|

|

|

|

See the screenshot below from the Blue Iris help file.

If you just want to use the license-plate model

objects:0,license-plate

If you just want to use the license-plate and ipcam-general model

objects:0,license-plate,ipcam-general
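If you have many cameras, the per-camera string can be generated instead of typed. The sketch below follows the pattern in the examples above: model names without the .pt extension, separated by commas, with objects:0 in front. It handles "everything except license-plate" by filtering the list in Python, since the thread does not confirm any exclusion syntax in Blue Iris itself. The function name `custom_models_field` is hypothetical.

```python
from typing import Iterable


def _stem(filename: str) -> str:
    """Drop a trailing '.pt' so 'license-plate.pt' becomes 'license-plate'."""
    return filename[:-3] if filename.endswith(".pt") else filename


def custom_models_field(
    available: Iterable[str],
    exclude: Iterable[str] = (),
    skip_default_objects: bool = True,
) -> str:
    """Build a comma-separated custom models string for one camera."""
    excluded = {_stem(name) for name in exclude}
    names = [_stem(name) for name in available if _stem(name) not in excluded]
    # Assumption from the examples above: "objects:0" goes first in the string.
    prefix = ["objects:0"] if skip_default_objects else []
    return ",".join(prefix + names)


models = [
    "actionnetv2.pt",
    "ipcam-animal.pt",
    "ipcam-combined.pt",
    "ipcam-dark.pt",
    "ipcam-general.pt",
    "license-plate.pt",
]
print(custom_models_field(models, exclude=["license-plate.pt"]))
```

The resulting string is then pasted into that camera's custom models box in Blue Iris.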

|

|

|

|

|

Thanks so much. I found the help page you referenced.

Would you know if these can be set globally? For example, right now BI shows all of these as being used:

actionnetv2.pt

ipcam-animal.pt

ipcam-combined.pt

ipcam-dark.pt

ipcam-general.pt

license-plate.pt

If I want to use only ipcam-combined and license-plate, where can I put objects:0 globally to skip this? If this should be asked in a BI forum, I can do it there. Thank you.

|

|

|

|

|

To disable objects globally, uncheck Default object detection.

|

|

|

|

|

Thanks so much. This has helped me get this set up correctly. I had to set each camera to the specific custom model and confirmed that CPAI is using these. It greatly decreased the load on my GPU and CPU.

modified 20-Nov-23 11:33am.

|

|

|

|
